1 Bigdata

BootcampBigdata2020-12-17

5 V

- volume

- velocity

- variety

- veracity

- value

data type

- structured data: csv, tsv, DB

- semi-structured data - 5-10%: logs, xml, json

- unstructured data: email, social media, sound, video, images, Word documents
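
To make the difference between structured and semi-structured data concrete, here is a minimal sketch using only the Python standard library. The sample records and field names (`id`, `name`, `tags`, `address`) are made up for illustration. It shows that CSV rows all share one schema, while JSON records can each carry a different set of keys.

```python
import csv
import io
import json

# Structured: every row has the same columns, so one fixed schema applies.
csv_text = "id,name,age\n1,Ann,34\n2,Bob,29\n"
rows = list(csv.DictReader(io.StringIO(csv_text)))

# Semi-structured: each record describes itself; fields may be missing or nested.
json_lines = [
    '{"id": 1, "name": "Ann", "tags": ["admin"]}',
    '{"id": 2, "name": "Bob", "address": {"city": "Oslo"}}',
]
records = [json.loads(line) for line in json_lines]

# Collect the distinct "schemas" seen in each data set.
csv_columns = {tuple(row.keys()) for row in rows}
json_keys = {tuple(sorted(rec.keys())) for rec in records}

print("distinct CSV schemas:", len(csv_columns))
print("distinct JSON key sets:", len(json_keys))
```

Unstructured data (images, audio, free text) has no such keys at all, so it usually needs a separate extraction step before it can be queried.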

Speed of data

- batch

- micro-batch

- real-time

Speed of data = from real time to batched processing
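
The three processing speeds can be sketched on the same small list of events. This is only an illustration in plain Python, not how a real engine works; the helper name `micro_batches` and the event values are hypothetical. Batch processes everything at once, micro-batch processes small fixed-size chunks, and real-time updates the result after every single event.

```python
from itertools import islice
from typing import Iterable, Iterator, List


def micro_batches(events: Iterable[int], size: int) -> Iterator[List[int]]:
    """Yield consecutive chunks of at most `size` events."""
    it = iter(events)
    while True:
        chunk = list(islice(it, size))
        if not chunk:
            return
        yield chunk


events = [3, 1, 4, 1, 5, 9, 2]

# Batch: one result after the whole data set has been collected.
batch_total = sum(events)

# Micro-batch: one result per small chunk of events.
micro_totals = [sum(chunk) for chunk in micro_batches(events, 3)]

# Real-time: the result is updated as each event arrives.
running_totals = []
total = 0
for event in events:
    total += event
    running_totals.append(total)

print(batch_total, micro_totals, running_totals)
```

The trade-off is latency versus overhead: smaller units give fresher results but cost more per event.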

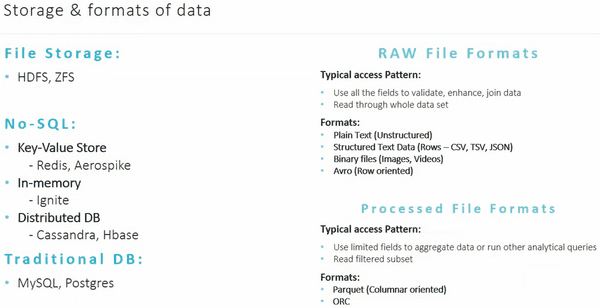

File Storage: HDFS, ZFS

No-SQL:

- `Key-Value Store`: Redis, Aerospike

- `In-memory`: Ignite

- `Distributed DB`: Cassandra, HBase

Traditional DB: MySQL, Postgres

Raw File Formats

Typical access Pattern:

- Use all the fields to validate, enhance, join data

- Read through whole data set

Formats:

- Plain Text (unstructured)

- Structured Text Data (Rows - CSV, TSV, JSON)

- Binary files (images, videos)

- Avro (Row oriented)

Processed File Formats

Typical access Pattern:

- Use limited fields to aggregate data or run other analytical queries

- Read filtered subset

Formats:

- Parquet (Columnar oriented)

- ORC

Storage & Formats of data

- Row format - OLTP, faster queries

- Columnar format - OLAP, historical/archive data

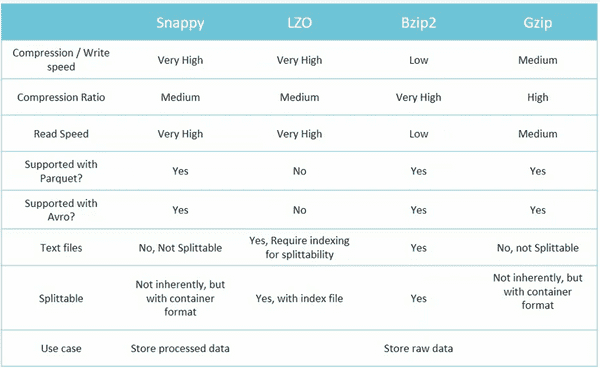
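
The row versus columnar distinction can be sketched with plain Python data structures. This is a toy model, not how Avro, Parquet, or ORC actually store bytes, and the field names (`user`, `country`, `amount`) are made up. A row layout keeps each record together, which suits reading or writing whole records. A columnar layout keeps each field together, which suits aggregating a few fields over many records.

```python
# Row layout: one complete record per entry (OLTP-style access).
rows = [
    {"user": "ann", "country": "NO", "amount": 10.0},
    {"user": "bob", "country": "SE", "amount": 25.5},
    {"user": "cid", "country": "NO", "amount": 4.5},
]

# Fetching a whole record touches a single entry.
record = rows[1]

# Columnar layout: one list per field (OLAP-style access).
columns = {key: [row[key] for row in rows] for key in rows[0]}

# An aggregate over one field reads only that column,
# not the "user" or "country" values.
total_amount = sum(columns["amount"])

print(record)
print(total_amount)
```

Storing similar values next to each other is also why columnar files tend to compress well.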

Data compression

Ideal Big Data solution - main technical characteristics

- Be scalable

- Fault Tolerant

- Ensure high availability

- Ensure data is widely accessible, but secure

- Support analytics, data science, and content applications

- Support workflow automation

- Integrate with legacy applications

- Be self-healing

Functions

- Data Collection

- Data Storage

- Data Exploration

- Data Governance

- Data Product

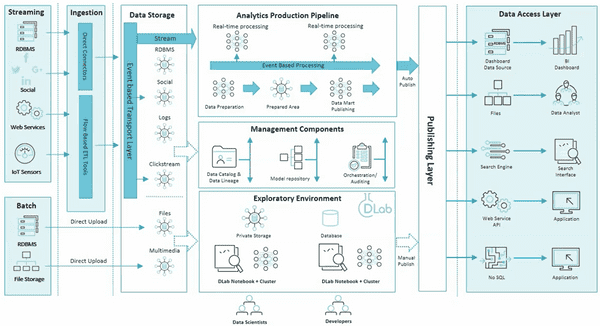

Blue Print

- ETL

- Streaming

- Batch

- Ingestion

- Exploratory Environment: a development environment that uses production data without impacting the production pipeline.
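
As a rough illustration of the ETL item above, here is a minimal sketch using only the Python standard library. The table name `payments`, the column names, and the sample data are all hypothetical, and an in-memory SQLite database stands in for a real warehouse. Extract reads the raw CSV, transform validates and converts the rows, and load writes the clean rows to the target.

```python
import csv
import io
import sqlite3

# Raw input with one bad record, standing in for an ingested file.
raw = "customer,amount\nann,10\nbob,not_a_number\ncid,5\n"


def extract(text):
    """Read raw CSV text into a list of dicts."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Keep only rows whose amount parses as a number."""
    clean = []
    for row in rows:
        try:
            clean.append((row["customer"], float(row["amount"])))
        except ValueError:
            continue  # drop invalid records
    return clean


def load(conn, rows):
    """Write the cleaned rows into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS payments (customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO payments VALUES (?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")
load(conn, transform(extract(raw)))
loaded = conn.execute("SELECT COUNT(*), SUM(amount) FROM payments").fetchone()
print(loaded)
```

In a real pipeline, rejected records would normally be logged or routed to a separate location rather than silently dropped.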

More

- Cloudera and Hortonworks have merged