201 Kafka 4

Bootcamp, Bigdata, 2020-12-17

Kafka

Apache Kafka is a distributed streaming platform

What is Kafka

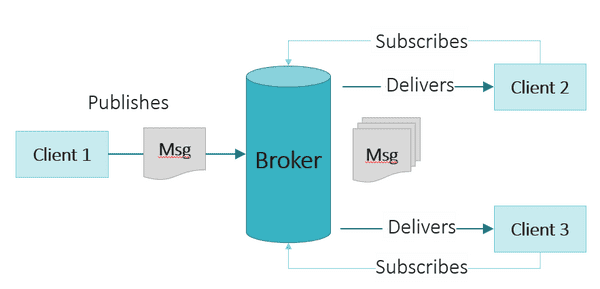

- Apache Kafka is a fast, scalable, durable, and fault-tolerant publish-subscribe messaging system

- Kafka is often used in place of traditional message brokers, such as those built on JMS or AMQP, because of its higher throughput, reliability and replication

- Kafka may work in combination with Storm, Spark, Samza, Flink, etc. for real-time analysis and rendering of streaming data

- Whatever the industry or use case, Kafka brokers massive message streams for low-latency analysis in Enterprise Apache Hadoop

What does Kafka do?

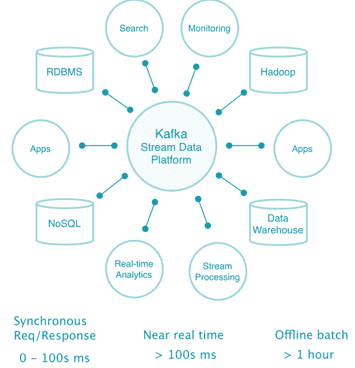

Apache Kafka supports a wide range of use cases where high throughput, reliable delivery, and horizontal scalability are important. Apache Spark and Apache Cassandra work very well in combination with Kafka.

Typical use cases include:

- Messaging

- Stream processing: pipelines that transfer and aggregate data; the lightweight Kafka Streams library can be used in place of Apache Storm or Apache Samza

- Metrics collection & monitoring

- Website activity tracking: page views, searches, and other user activity captured to topics

- Event sourcing (CDC)

- Log aggregation

- Commit log (log replication)

Qualities

- Scalability

- Durability

- Reliability

- Performance

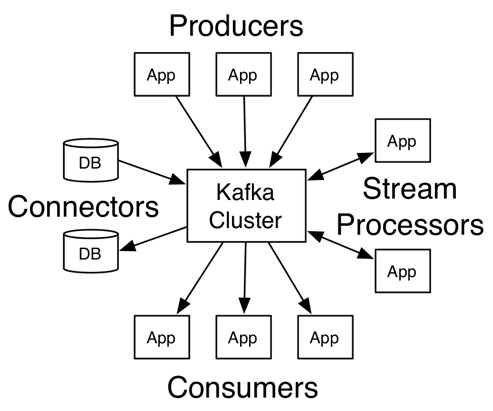

High-level overview

Kafka architecture

Core APIs

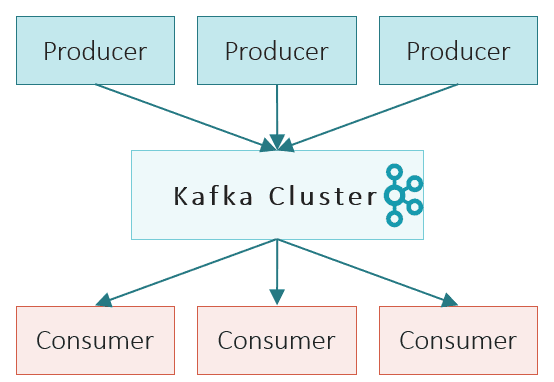

- The Producer API allows an application to publish a stream of records to one or more Kafka topics.

- The Consumer API allows an application to subscribe to one or more topics and process the stream of records produced to them.

- The Connect API allows building and running reusable producers or consumers that connect Kafka topics to existing applications or data systems. For example, a connector to a relational database might capture every change to a table.

- The Streams API allows an application to act as a stream processor, consuming an input stream from one or more topics and producing an output stream to one or more output topics, transforming the input streams to output streams.
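The producer, consumer, and stream-processor roles described above can be sketched with a small in-memory model. This is a toy illustration, not the Kafka client API: `ToyBroker`, `publish`, `poll`, and `uppercase_stream` are hypothetical names, and the sketch ignores partitions, networking, and persistence.

```python
from collections import defaultdict


class ToyBroker:
    """In-memory stand-in for a Kafka cluster (hypothetical, for illustration only)."""

    def __init__(self):
        # topic name -> append-only list of records
        self.topics = defaultdict(list)

    def publish(self, topic, record):
        """Producer role: append a record to a topic and return its offset."""
        self.topics[topic].append(record)
        return len(self.topics[topic]) - 1

    def poll(self, topic, offset):
        """Consumer role: return all records at or after the given offset."""
        return self.topics[topic][offset:]


def uppercase_stream(broker, source, sink):
    """Streams role: read each record from `source`, transform it, write it to `sink`."""
    for record in broker.poll(source, 0):
        broker.publish(sink, record.upper())


broker = ToyBroker()
broker.publish("greetings", "hello")
broker.publish("greetings", "kafka")
uppercase_stream(broker, "greetings", "greetings-upper")
result = broker.poll("greetings-upper", 0)
print(result)
```

The input topic is left untouched by the transformation; the stream processor only reads from it and writes to a separate output topic, which mirrors the input-to-output shape of the Streams API description.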

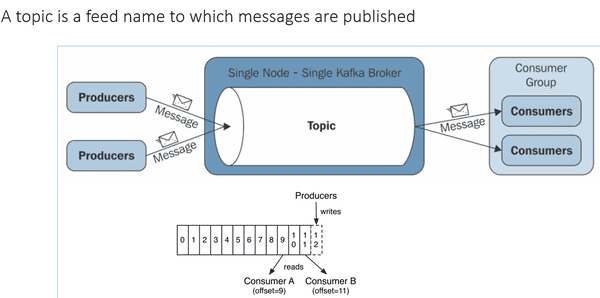

Topics

Partitions

- A topic consists of 1 or more partitions

- Each partition is an ordered, immutable sequence of messages that is continually appended to a commit log
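A minimal sketch of the idea, assuming a topic is modelled as a fixed number of append-only lists. `ToyTopic` and its methods are hypothetical names, and the CRC32 hash is a stand-in chosen for simplicity (Kafka's default partitioner for keyed records uses a different hash, murmur2). The point it shows is that records with the same key land in the same partition and keep their append order there.

```python
import zlib


class ToyTopic:
    """A topic as a fixed number of append-only partition logs (illustrative only)."""

    def __init__(self, num_partitions):
        self.partitions = [[] for _ in range(num_partitions)]

    def partition_for(self, key):
        # Assumption: CRC32 modulo the partition count. Kafka itself uses a
        # different hash function, but the mapping idea is the same.
        return zlib.crc32(key.encode("utf-8")) % len(self.partitions)

    def append(self, key, value):
        """Append to the key's partition and return (partition, offset)."""
        p = self.partition_for(key)
        self.partitions[p].append((key, value))
        return p, len(self.partitions[p]) - 1

    def read(self, partition, offset):
        """Read records from one partition starting at `offset`, in append order."""
        return self.partitions[partition][offset:]


topic = ToyTopic(num_partitions=3)
p1, o1 = topic.append("user-42", "login")
p2, o2 = topic.append("user-42", "click")
p3, o3 = topic.append("user-42", "logout")
values = [value for _key, value in topic.read(p1, 0)]
print(p1 == p2 == p3, (o1, o2, o3), values)
```

Ordering is only guaranteed within a single partition, which is why choosing the record key matters when related events must be read in sequence.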

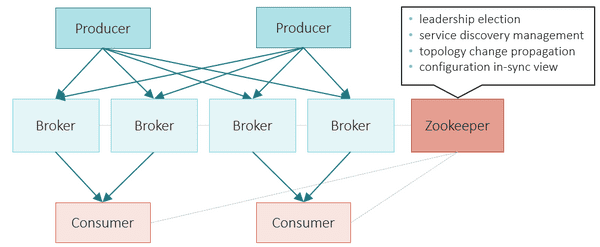

Kafka nouns

- topic, producer, consumer, broker, cluster,

- zookeeper, storm library, pub/sub

- Kafka is comparable to traditional messaging systems such as ActiveMQ

- topics, partitions, replicas, replication, in-sync replicas

- leader, follower

- load balance

- consumer group

- fault tolerance

- log compaction, log cleaner

- delivery semantics
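Of the terms above, log compaction is perhaps the easiest to show in a few lines. The sketch below is a simplified model, assuming the log is a list of `(key, value)` pairs and that a value of `None` marks a delete (a tombstone). The function name `compact` is hypothetical. Real compaction works on log segments in the background, keeps the original offsets, and retains tombstones for a configurable period before dropping them.

```python
def compact(log):
    """Return a compacted copy of `log`, a list of (key, value) pairs.

    Keeps only the most recent record per key, preserving the relative
    order of the surviving records. A value of None is treated as a
    delete marker (tombstone) and removes the key entirely.
    """
    latest = {}
    for offset, (key, _value) in enumerate(log):
        latest[key] = offset  # later offsets overwrite earlier ones
    return [
        (key, value)
        for offset, (key, value) in enumerate(log)
        if latest[key] == offset and value is not None
    ]


log = [("k1", "a"), ("k2", "b"), ("k1", "c"), ("k3", "d"), ("k2", None)]
compacted = compact(log)
print(compacted)
```

Here `k1` keeps only its latest value, `k3` is unchanged, and `k2` disappears because its most recent record is a tombstone.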

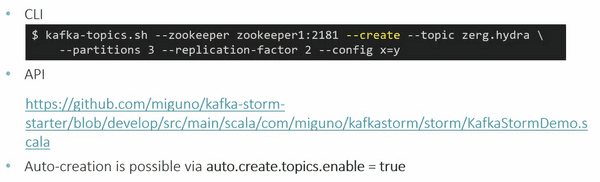

There are 3 ways a topic can be created

Data serialization formats

- Kafka does not care about data format for a message payload

- it is up to the developer to handle serialization/deserialization.

- common choices in practice: Avro, JSON
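Since the payload is just bytes as far as Kafka is concerned, the application supplies both directions of the conversion. A minimal sketch using JSON from the Python standard library follows. The `serialize` and `deserialize` helpers and the event fields are illustrative assumptions, not part of any Kafka client.

```python
import json


def serialize(event):
    """Encode a dict as UTF-8 JSON bytes, suitable for use as a message payload."""
    return json.dumps(event, sort_keys=True).encode("utf-8")


def deserialize(payload):
    """Decode UTF-8 JSON bytes back into a dict."""
    return json.loads(payload.decode("utf-8"))


event = {"user": "alice", "action": "pageview", "ts": 1608163200}
payload = serialize(event)
roundtrip = deserialize(payload)
print(payload)
print(roundtrip == event)
```

JSON is easy to inspect but carries no schema. Avro is often chosen instead when producers and consumers need an explicit, evolvable schema.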

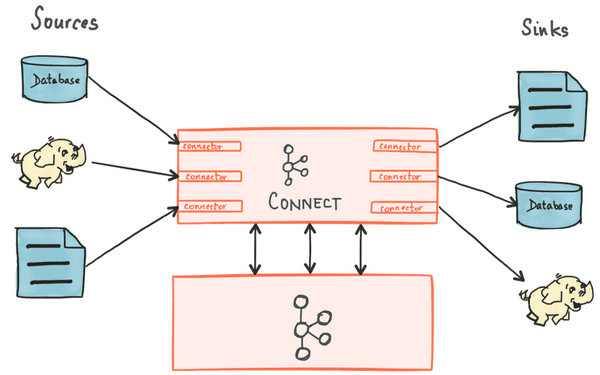

Kafka connect

- Connectors: the high-level abstraction that coordinates data streaming by managing tasks

- Tasks: the implementation of how data is copied to or from Kafka

- Workers: the running processes that execute connectors and tasks, in either standalone or distributed mode

- Converters: the code used to translate data between Connect and the system sending or receiving data

- Transforms: simple logic to alter each message produced by or sent to a connector
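How these pieces fit together can be sketched as a small pipeline. This is a conceptual model only, not the Kafka Connect API: every function name here (`source_task`, `mask_email`, `json_converter`, `run_worker`) and the sample rows are hypothetical, and a real worker also handles offsets, retries, and task distribution.

```python
import json


def source_task(rows):
    """Task: copy data out of an external system (here, a list of dicts)."""
    for row in rows:
        yield dict(row)


def mask_email(record):
    """Transform: alter each record before it is written to Kafka."""
    masked = dict(record)
    masked["email"] = "***"
    return masked


def json_converter(record):
    """Converter: translate the in-memory record into bytes for the topic."""
    return json.dumps(record, sort_keys=True).encode("utf-8")


def run_worker(rows, transforms, converter):
    """Worker: run the task, apply transforms in order, then convert."""
    out = []
    for record in source_task(rows):
        for transform in transforms:
            record = transform(record)
        out.append(converter(record))
    return out


rows = [{"id": 1, "email": "a@example.com"}, {"id": 2, "email": "b@example.com"}]
messages = run_worker(rows, [mask_email], json_converter)
print(messages)
```

Keeping the transform and the converter as separate steps reflects the split in the definitions above: transforms change the content of a record, while converters only change its wire format.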

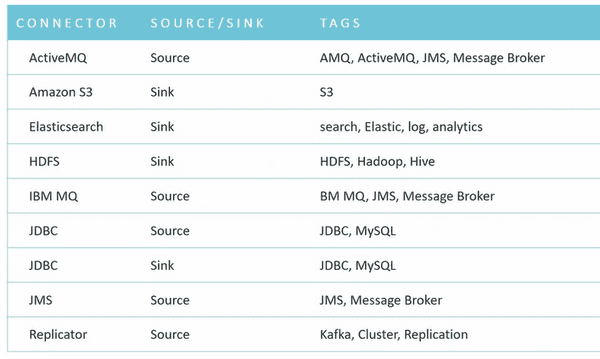

Standard Confluent connectors

System Tools

- Kafka Manager

- Consumer Offset Checker

- Dump Log Segment

- Export Zookeeper Offsets

- Get Offset Shell

- Import Zookeeper Offsets

- JMX Tool

- Kafka Migration Tool

- Mirror Maker

- Replay Log Producer

- Simple Consumer Shell

- State Change Log Merger

- Update Offsets In Zookeeper

- Verify Consumer Rebalance

Monitoring & Configuration

Use of standard monitoring tools is recommended

- Graphite

  - Puppet module: https://github.com/miguno/puppet-graphite

  - Java API, also used by Kafka: http://metrics.codahale.com/

- JMX

Collect logging files into a central place

- Logstash/Kibana and friends

- Helps with troubleshooting, debugging, etc. – notably if you can correlate logging data with numeric metrics

Q/A

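- ISR: in-sync replicas, the set of replicas that are fully caught up with the partition leader; it is the core of Kafka's intra-cluster replication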
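As a rough illustration of how an in-sync replica set relates to what consumers can read, the sketch below computes the set from follower lag and then derives the high watermark. It is a simplified model under stated assumptions: the function names, broker ids, and numbers are hypothetical, and the time-based lag check only loosely mirrors the broker setting `replica.lag.time.max.ms`.

```python
def in_sync_replicas(leader, last_caught_up, now, max_lag):
    """Return the leader plus every follower that caught up within `max_lag`.

    `last_caught_up` maps follower id -> time it last matched the leader's log end.
    """
    isr = {leader}
    for follower, caught_up_at in last_caught_up.items():
        if now - caught_up_at <= max_lag:
            isr.add(follower)
    return isr


def high_watermark(log_end_offsets, isr):
    """Offset up to which all in-sync replicas have the data (min of their log ends)."""
    return min(log_end_offsets[replica] for replica in isr)


last_caught_up = {2: 95.0, 3: 60.0}        # seconds; broker 3 has stalled
log_end_offsets = {1: 120, 2: 118, 3: 70}  # broker 1 is the leader
isr = in_sync_replicas(leader=1, last_caught_up=last_caught_up, now=100.0, max_lag=30.0)
hw = high_watermark(log_end_offsets, isr)
print(sorted(isr), hw)
```

Broker 3 drops out of the set because it has not caught up recently, so its short log no longer holds back the high watermark.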

Ecosystem

- https://cwiki.apache.org/confluence/display/KAFKA/Ecosystem

- zookeeper

      $ kafka-topics.sh --zookeeper zookeeper1:2181 --create --topic zerg.hydra \
          --partitions 3 --replication-factor 2 --config x=y

- istio