201 Streaming 5

Bootcamp, Bigdata, 2020-12-17

Essential concepts in Spark Streaming:

- StreamingContext

- Stream Operators

- Batch, Batch time and Job Set

- Streaming Job

- Discretized Streams (DStreams)

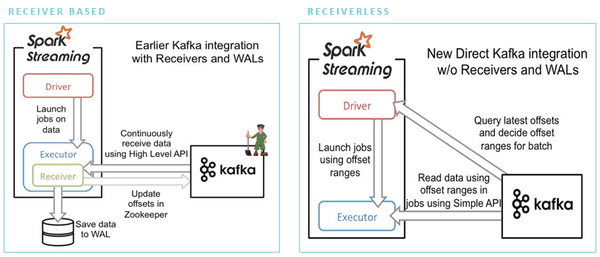

- Receivers

The Discretized Stream (DStream) is the fundamental abstraction of Spark Streaming. It is essentially a sequence of RDDs, one per batch, whose elements are the data received from the input streams during that batch interval. Windowed or stateful operators can extend the scope of an RDD beyond a single batch.
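To make the "stream of RDDs" idea concrete, here is a minimal plain-Python sketch of discretization. It does not use Spark: the function names `discretize` and `windowed`, the integer timestamps, and the choice to label each batch by the start of its interval are all assumptions made for illustration. Each list stands in for the RDD of one batch, and the window function shows how a windowed operator widens the scope to several batches.

```python
def discretize(events, batch_interval):
    """Group (timestamp, value) events into fixed-size batches.

    Returns a dict mapping batch start time -> list of values.
    Every interval up to the last event gets a batch, even if it is empty.
    """
    if not events:
        return {}
    last = max(timestamp for timestamp, _ in events)
    batches = {start: [] for start in range(0, last + 1, batch_interval)}
    for timestamp, value in events:
        batch_start = (timestamp // batch_interval) * batch_interval
        batches[batch_start].append(value)
    return batches


def windowed(batches, num_batches):
    """For each batch, combine it with the preceding num_batches - 1 batches."""
    times = sorted(batches)
    result = {}
    for i, batch_time in enumerate(times):
        in_window = times[max(0, i - num_batches + 1): i + 1]
        result[batch_time] = [v for t in in_window for v in batches[t]]
    return result


events = [(0, "a"), (1, "b"), (5, "d")]   # (timestamp in seconds, value)
batches = discretize(events, batch_interval=2)
print(batches)               # {0: ['a', 'b'], 2: [], 4: ['d']}
print(windowed(batches, 2))  # {0: ['a', 'b'], 2: ['a', 'b'], 4: ['d']}
```

Note that the interval starting at 2 still produces a batch although no data arrived, and that the window of two batches lets the data from the first batch show up again in the second result.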

Spark Streaming

- Provides a way to consume a continuous stream of data

- Scalable, high-throughput, fault-tolerant

- Built on top of Spark Core

- Based on RDDs

- API is similar to Core API

- Supports many inputs

- Integrates with other Spark modules

- Micro-batch architecture

Spark Streaming sources

- Basic sources

  - Packaged together with the Spark Streaming library

  - Available through

  - Socket Streams, File Streams, Actor Streams

- Advanced sources

  - Packaged separately

  - Available through utility classes

  - Kafka, Flume, Kinesis, Twitter

- Custom sources
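As a hedged illustration of a basic source, the sketch below wires a socket stream into a per-batch word count with PySpark. It assumes a PySpark release that still ships the legacy `pyspark.streaming` (DStream) API and a text server listening on a host and port of your choosing. The names `split_words` and `run_socket_word_count`, the application name, and the default durations are hypothetical choices for this example. Advanced sources such as Kafka need separately packaged connector artifacts and are not shown.

```python
def split_words(line):
    """Split one line of text into words (plain Python, no Spark needed)."""
    return line.split()


def run_socket_word_count(host, port, batch_seconds=5, timeout_seconds=30):
    """Count words per batch from a socket source (illustrative sketch)."""
    # Imported lazily so split_words can be used without Spark installed.
    from pyspark import SparkContext
    from pyspark.streaming import StreamingContext

    # A receiver occupies one thread, so a local run needs at least two.
    sc = SparkContext("local[2]", "SocketWordCountSketch")
    ssc = StreamingContext(sc, batch_seconds)  # batch interval in seconds

    # Basic source: created directly from the StreamingContext.
    lines = ssc.socketTextStream(host, port)
    # A file-based basic source would be: ssc.textFileStream("<directory>")

    counts = (lines.flatMap(split_words)
                   .map(lambda word: (word, 1))
                   .reduceByKey(lambda a, b: a + b))
    counts.pprint()  # print the first elements of every batch

    ssc.start()
    try:
        # Run for a bounded time so the sketch does not block forever.
        ssc.awaitTerminationOrTimeout(timeout_seconds)
    finally:
        ssc.stop(stopSparkContext=True, stopGraceFully=False)
```

To try it, start a text server (for example `nc -lk 9999`) and call `run_socket_word_count("localhost", 9999)`. Keeping `split_words` as a plain function makes the transformation logic checkable without a running Spark cluster.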